Read From BigQuery With Apache Beam

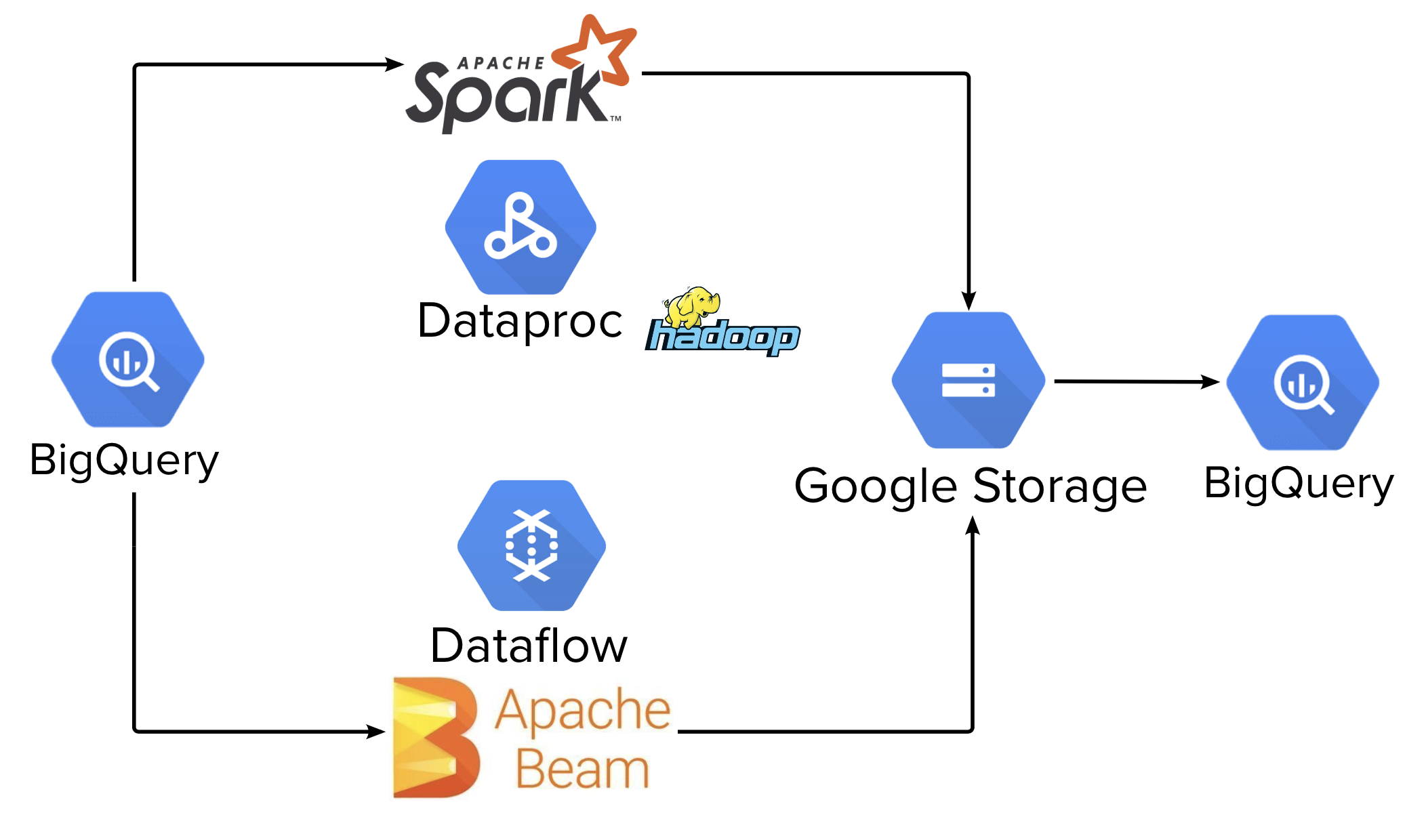

See the glossary for definitions. A typical scenario: I have a GCS bucket from which I'm trying to read about 200k files and then write them to BigQuery. A related one is reading files from multiple folders in Apache Beam and mapping the outputs to filenames, so that the file contents and the file name are written to BigQuery as (filecontents, filename) pairs. Another is reading a table in GCP BigQuery, performing some aggregation on it, and finally writing the output to another table with a Beam pipeline. When BigQuery data is used as a side input, the runner may use some caching techniques to share the side inputs between calls in order to avoid excessive reading. One signature in the Python API reference includes a parameter typed `Union[str, apache_beam.options.value_provider.ValueProvider] = None`, followed by `validate`. I initially started off the journey with the Apache Beam solution for BigQuery via its Google BigQuery I/O connector.
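A minimal sketch of the files-to-BigQuery case follows. It is an illustration, not the original poster's code: the helper names `to_bq_row` and `files_to_bigquery`, the two-column schema, and any bucket or table names passed in are assumptions. It expands several folder globs with `fileio.MatchAll`, opens each match with `fileio.ReadMatches`, and appends one (filecontents, filename) row per file with `WriteToBigQuery`.

```python
# Hypothetical two-column schema holding one row per input file.
FILE_TABLE_SCHEMA = "filename:STRING,filecontents:STRING"


def to_bq_row(path, contents):
    """Map one file to a BigQuery row dict with filename and filecontents columns."""
    return {"filename": path, "filecontents": contents}


def files_to_bigquery(pipeline, folder_patterns, table_spec):
    """Read every file matched by several folder globs and append them to BigQuery."""
    # Imported lazily so to_bq_row can be used and tested without Beam installed.
    import apache_beam as beam
    from apache_beam.io import fileio

    return (
        pipeline
        | "FolderPatterns" >> beam.Create(folder_patterns)
        | "MatchAll" >> fileio.MatchAll()  # expands each glob into file metadata
        | "ReadMatches" >> fileio.ReadMatches()  # yields ReadableFile objects
        | "ToRow" >> beam.Map(lambda f: to_bq_row(f.metadata.path, f.read_utf8()))
        | "Write" >> beam.io.WriteToBigQuery(
            table_spec,
            schema=FILE_TABLE_SCHEMA,
            create_disposition=beam.io.BigQueryDisposition.CREATE_IF_NEEDED,
            write_disposition=beam.io.BigQueryDisposition.WRITE_APPEND,
        )
    )
```

For example, inside `with beam.Pipeline(options=options) as p:` one might call `files_to_bigquery(p, ["gs://my-bucket/folder-a/*", "gs://my-bucket/folder-b/*"], "my-project:my_dataset.files")`, where the bucket and table are placeholders. Reading each file whole with `read_utf8()` assumes UTF-8 text files small enough to hold in a worker's memory.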

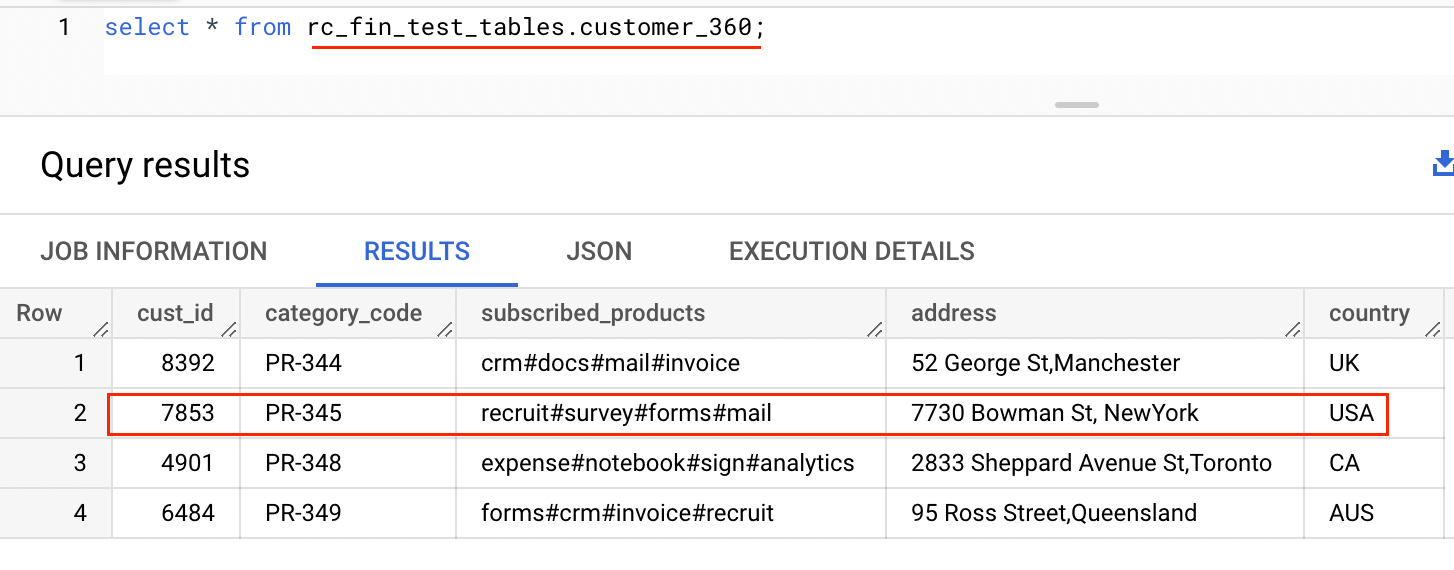

A BigQuery table or a query must be specified with `beam.io.gcp.bigquery.ReadFromBigQuery`. Older examples use `beam.io.Read(beam.io.BigQuerySource(table_spec))`; `BigQuerySource` is deprecated in favor of `ReadFromBigQuery`. A typical beginner question ("I am new to Apache Beam") asks for help with sample code that reads JSON data using Apache Beam, and about the structure of Apache Beam pipeline syntax in Python. The following graphs show various metrics when reading from and writing to BigQuery. For a no-code route, a tutorial uses the Pub/Sub Topic to BigQuery template to create and run a Dataflow template job using the Google Cloud console or the Google Cloud CLI.
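The table-or-query rule can be made explicit with a small helper. This is a sketch under assumptions: `bigquery_read_kwargs` and `read_rows` are hypothetical names, and the helper only mirrors the rule stated above rather than reproducing Beam's own validation code. It shows the `ReadFromBigQuery` form that replaces `beam.io.Read(beam.io.BigQuerySource(table_spec))`.

```python
def bigquery_read_kwargs(table=None, query=None):
    """Return keyword arguments for beam.io.ReadFromBigQuery.

    Hypothetical helper enforcing that exactly one of a table or a query is given.
    """
    if table is None and query is None:
        raise ValueError("A BigQuery table or a query must be specified")
    if table is not None and query is not None:
        raise ValueError("Specify either a table or a query, not both")
    if table is not None:
        # Table spec such as "project:dataset.table" or "dataset.table".
        return {"table": table}
    # ReadFromBigQuery defaults to legacy SQL, so opt in to standard SQL.
    return {"query": query, "use_standard_sql": True}


def read_rows(pipeline, table=None, query=None):
    """Modern replacement for beam.io.Read(beam.io.BigQuerySource(table_spec))."""
    import apache_beam as beam  # lazy import keeps the helper testable without Beam

    # Each output element is a dict keyed by column name.
    return pipeline | "ReadFromBigQuery" >> beam.io.ReadFromBigQuery(
        **bigquery_read_kwargs(table=table, query=query)
    )
```

With the default export-based read method, Beam also needs a GCS location for temporary files, supplied through `gcs_location` or the `--temp_location` pipeline option.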

To read an entire BigQuery table in Python, use the `table` parameter with the BigQuery table name; in the Java SDK, use the `from` method with a BigQuery table name. Other common tasks are reading CSV files and writing them to BigQuery, and setting up a pipeline that reads from Kafka and writes to BigQuery with the Apache Beam BigQuery Python I/O. When I learned that Spotify data engineers use Apache Beam in Scala for most of their pipeline jobs, I thought it would work for my pipelines. A related question: what is the estimated cost to read from BigQuery?
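Reading CSV and writing to BigQuery might look like the sketch below. The column names, the `CSV_SCHEMA` string, and the helper names are hypothetical placeholders for a real file layout. `ReadFromText` skips the header line, `csv.reader` parses each line so quoted commas survive, and `WriteToBigQuery` appends the resulting dictionaries.

```python
import csv

# Hypothetical CSV layout; replace with the real header and column types.
CSV_COLUMNS = ["name", "city", "score"]
CSV_SCHEMA = "name:STRING,city:STRING,score:INTEGER"


def csv_line_to_row(line):
    """Parse one CSV line into a BigQuery row dict.

    csv.reader handles quoted fields that contain commas.
    """
    values = next(csv.reader([line]))
    row = dict(zip(CSV_COLUMNS, values))
    row["score"] = int(row["score"])  # match the INTEGER column type
    return row


def csv_to_bigquery(pipeline, csv_pattern, table_spec):
    """Read CSV files matching csv_pattern and append their rows to table_spec."""
    import apache_beam as beam  # lazy import keeps csv_line_to_row testable

    return (
        pipeline
        | "ReadCsv" >> beam.io.ReadFromText(csv_pattern, skip_header_lines=1)
        | "ParseCsv" >> beam.Map(csv_line_to_row)
        | "WriteRows" >> beam.io.WriteToBigQuery(
            table_spec,
            schema=CSV_SCHEMA,
            create_disposition=beam.io.BigQueryDisposition.CREATE_IF_NEEDED,
            write_disposition=beam.io.BigQueryDisposition.WRITE_APPEND,
        )
    )
```

Because `ReadFromText` splits input on newlines, this approach assumes no quoted field spans multiple lines.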

Apache Beam: Start Your Adventure With Big Data Analityk.edu.pl

To read data from BigQuery in the Java SDK, use `BigQueryIO.Read`, declared as `public abstract static class BigQueryIO.Read extends PTransform<PBegin, PCollection<TableRow>>`: it is applied at the start of a pipeline (`PBegin`) and produces a `PCollection` of `TableRow` objects. A streaming variant of the same task: I'm trying to set up an Apache Beam pipeline that reads from Kafka and writes to BigQuery.
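For the Kafka-to-BigQuery pipeline, a minimal sketch is below. It assumes Kafka message values are UTF-8 JSON objects and that the target schema (passed in by the caller) has a `kafka_key` column; `kafka_record_to_row` and `kafka_to_bigquery` are hypothetical names. `ReadFromKafka` is a cross-language transform backed by the Java KafkaIO, so building the pipeline needs a Java runtime or an expansion service, and because the source is unbounded the pipeline should run in streaming mode (`--streaming`).

```python
import json


def kafka_record_to_row(record):
    """Convert one Kafka (key, value) record into a BigQuery row dict.

    Assumes values are UTF-8 encoded JSON objects; keys may be missing (None).
    """
    key, value = record
    row = json.loads(value.decode("utf-8"))
    row["kafka_key"] = key.decode("utf-8") if key is not None else None
    return row


def kafka_to_bigquery(pipeline, bootstrap_servers, topic, table_spec, schema):
    """Stream records from one Kafka topic into a BigQuery table."""
    # Lazy imports keep kafka_record_to_row testable without Beam installed.
    import apache_beam as beam
    from apache_beam.io.kafka import ReadFromKafka

    return (
        pipeline
        # With the default deserializers, elements arrive as (bytes, bytes) tuples.
        | "ReadKafka" >> ReadFromKafka(
            consumer_config={"bootstrap.servers": bootstrap_servers},
            topics=[topic],
        )
        | "ToRow" >> beam.Map(kafka_record_to_row)
        | "WriteBQ" >> beam.io.WriteToBigQuery(
            table_spec,
            schema=schema,
            create_disposition=beam.io.BigQueryDisposition.CREATE_IF_NEEDED,
            write_disposition=beam.io.BigQueryDisposition.WRITE_APPEND,
        )
    )
```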

Introduction to Apache Beam

Another question concerns using the Apache Beam GCP DataflowRunner to write to BigQuery from Python, which raised a `ValueError`. In a related case, the requirement is to pass a JSON file containing five to ten JSON records as input and read it.
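Reading a small JSON input file with five to ten records could be sketched as follows. The helper `json_records` is hypothetical and assumes the file is either one JSON array of objects or newline-delimited JSON; each file is read whole, which suits small files like the one described. Every record becomes one BigQuery row.

```python
import json


def json_records(text):
    """Yield dict records from the text of one small JSON input file.

    Accepts either a single JSON array of objects or newline-delimited JSON.
    """
    stripped = text.strip()
    if not stripped:
        return
    if stripped.startswith("["):
        yield from json.loads(stripped)
    else:
        for line in stripped.splitlines():
            if line.strip():  # skip blank lines between records
                yield json.loads(line)


def json_file_to_bigquery(pipeline, file_pattern, table_spec, schema):
    """Read small JSON files and write one BigQuery row per record."""
    import apache_beam as beam  # lazy import keeps json_records testable
    from apache_beam.io import fileio

    return (
        pipeline
        | "Match" >> fileio.MatchFiles(file_pattern)
        | "Read" >> fileio.ReadMatches()
        # Read each small file whole, then emit one element per JSON record.
        | "Records" >> beam.FlatMap(lambda f: json_records(f.read_utf8()))
        | "Write" >> beam.io.WriteToBigQuery(
            table_spec,
            schema=schema,
            create_disposition=beam.io.BigQueryDisposition.CREATE_IF_NEEDED,
            write_disposition=beam.io.BigQueryDisposition.WRITE_APPEND,
        )
    )
```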

Google Cloud Blog News, Features and Announcements

A common source of trouble is how to output the data from Apache Beam to Google BigQuery. Mirroring the read side, a write transform to a BigQuery sink accepts PCollections of dictionaries, one dictionary per row.
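Writing dictionaries to BigQuery is sketched below with a dictionary-form schema, the `{'fields': [...]}` layout that `WriteToBigQuery` accepts alongside the `'name:TYPE,...'` string form. `make_schema` and `write_rows` are hypothetical helpers, and the table spec is whatever the caller passes in.

```python
def make_schema(fields):
    """Build a dict-form BigQuery table schema from (name, type, mode) tuples."""
    return {
        "fields": [
            {"name": name, "type": field_type, "mode": mode}
            for name, field_type, mode in fields
        ]
    }


def write_rows(rows, table_spec, schema):
    """Write a PCollection of row dicts (keyed by column name) to BigQuery."""
    import apache_beam as beam  # lazy import keeps make_schema testable

    return rows | "WriteToBigQuery" >> beam.io.WriteToBigQuery(
        table_spec,
        schema=schema,
        create_disposition=beam.io.BigQueryDisposition.CREATE_IF_NEEDED,
        # Replaces any existing table contents on each run.
        write_disposition=beam.io.BigQueryDisposition.WRITE_TRUNCATE,
    )
```

For instance, `make_schema([("source", "STRING", "NULLABLE"), ("quote", "STRING", "REQUIRED")])` produces a two-column schema. `WRITE_APPEND` would add to the table instead of replacing it.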

How to submit a BigQuery job using Google Cloud Dataflow/Apache Beam?

The default mode is to return table rows read from a BigQuery source as dictionaries, keyed by column name. This is done for more convenient programming.

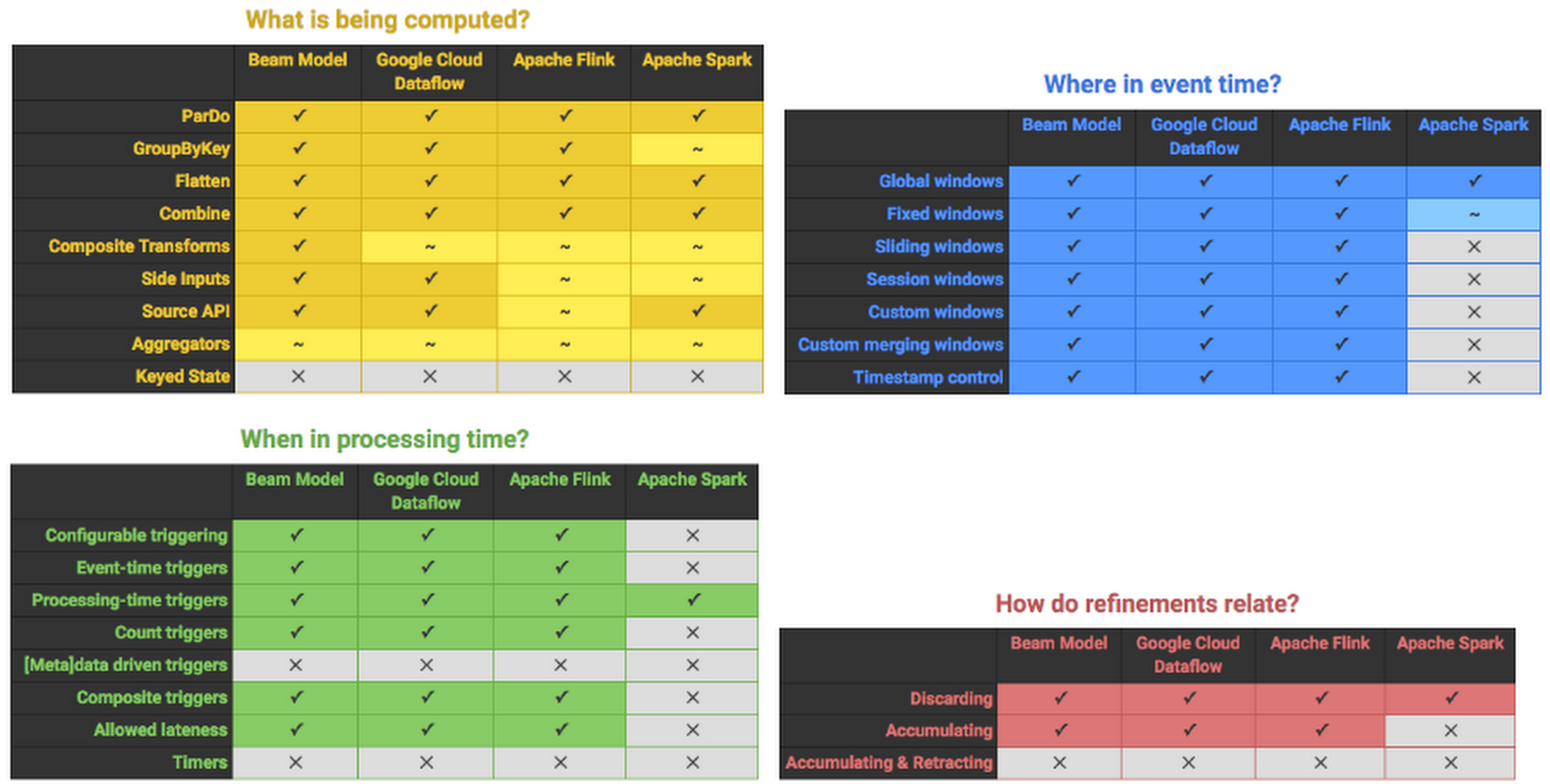

Apache Beam Tutorial: I Simmered the Official Docs Until Tender │ YUUKOU's Experience Points

A BigQuery read can also be split into a large main input and a small side input, for example `main_table = pipeline | 'VeryBig' >> beam.io.ReadFromBigQuery(...)` and `side_table = ...`, with the side table passed to the transform that processes the main table.
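The main-input/side-input pattern can be sketched as below. The column names `region_id` and `region_name`, the table specs, and the helper names are hypothetical; the labels `VeryBig` and `NotBig` echo the `main_table` fragment. The small table is turned into (key, value) pairs and passed with `beam.pvalue.AsDict`, so each main-table row can look up its value without a second BigQuery read per element.

```python
def attach_region(row, region_names):
    """Enrich one main-table row using a small lookup dict supplied as a side input."""
    enriched = dict(row)
    enriched["region_name"] = region_names.get(row.get("region_id"))
    return enriched


def join_with_side_table(pipeline, main_table_spec, side_table_spec):
    """Join a large BigQuery table against a small one passed as a side input."""
    import apache_beam as beam  # lazy import keeps attach_region testable

    main_table = pipeline | "VeryBig" >> beam.io.ReadFromBigQuery(table=main_table_spec)
    side_table = (
        pipeline
        | "NotBig" >> beam.io.ReadFromBigQuery(table=side_table_spec)
        | "KeyById" >> beam.Map(lambda r: (r["region_id"], r["region_name"]))
    )
    # AsDict materializes the small table; the runner may cache it across calls.
    return main_table | "Join" >> beam.Map(attach_region, beam.pvalue.AsDict(side_table))
```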

To Read An Entire BigQuery Table, Use The table Parameter With The BigQuery Table Name.

For example, a pipeline can read a whole table in GCP BigQuery, perform some aggregation on it, and finally write the output to another table.
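Read, aggregate, write might look like this sketch. It assumes a source table with `word` and `word_count` columns, such as the public sample `bigquery-public-data:samples.shakespeare`; the destination table spec and the helper names are hypothetical. `CombinePerKey(sum)` totals the counts per word before the rows are written to the second table.

```python
def word_and_count(row):
    """Key a shakespeare-style row dict by word, keeping its per-corpus count."""
    return row["word"], row["word_count"]


def to_total_row(kv):
    """Turn a (word, total) pair back into a row dict for the output table."""
    word, total = kv
    return {"word": word, "total": total}


def aggregate_table(pipeline, source_table, dest_table):
    """Read a whole table, sum word_count per word, and write the totals."""
    import apache_beam as beam  # lazy import keeps the helpers testable

    return (
        pipeline
        | "ReadWholeTable" >> beam.io.ReadFromBigQuery(table=source_table)
        | "KeyByWord" >> beam.Map(word_and_count)
        | "SumPerWord" >> beam.CombinePerKey(sum)
        | "ToRow" >> beam.Map(to_total_row)
        | "WriteTotals" >> beam.io.WriteToBigQuery(
            dest_table,
            schema="word:STRING,total:INTEGER",
            create_disposition=beam.io.BigQueryDisposition.CREATE_IF_NEEDED,
            write_disposition=beam.io.BigQueryDisposition.WRITE_TRUNCATE,
        )
    )
```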

The Default Mode Is To Return Table Rows Read From A BigQuery Source As Dictionaries.

Each element of the resulting PCollection is a Python dictionary mapping column names to values, so downstream transforms read fields as `row['column_name']`.

I'm Using The Logic From Here To Filter Out Some Coordinates:

The problem is that I'm having trouble getting this filtering step to work.
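Since the original filtering logic is not shown, the sketch below swaps in a simple inclusive latitude/longitude bounding box. The `latitude` and `longitude` column names, the box bounds, and the helper names are assumptions. `beam.Filter` forwards the extra arguments to the predicate.

```python
def in_bounding_box(row, min_lat, max_lat, min_lon, max_lon):
    """Keep rows whose latitude/longitude fall inside an inclusive box."""
    lat, lon = row.get("latitude"), row.get("longitude")
    if lat is None or lon is None:
        return False  # drop rows with missing coordinates
    return min_lat <= lat <= max_lat and min_lon <= lon <= max_lon


def filter_coordinates(rows, box):
    """Apply the box filter to a PCollection of BigQuery row dicts."""
    import apache_beam as beam  # lazy import keeps in_bounding_box testable

    min_lat, max_lat, min_lon, max_lon = box
    return rows | "InBoundingBox" >> beam.Filter(
        in_bounding_box, min_lat, max_lat, min_lon, max_lon
    )
```

This box test does not handle regions that cross the antimeridian.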

Using Apache Beam GCP DataflowRunner To Write To BigQuery (Python): ValueError

Moving a pipeline that outputs data from Apache Beam to Google BigQuery onto Dataflow leaves the BigQuery Python I/O transforms unchanged; what changes is the set of pipeline options passed to the runner.
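A sketch of the Dataflow-specific part is below. The project, region, bucket, and job name are placeholders, and `dataflow_args` and `make_options` are hypothetical helpers; the flags themselves (`--runner`, `--project`, `--region`, `--temp_location`, `--job_name`) are standard Beam/Dataflow pipeline options. Because the original error message is cut off, it is only an assumption that a missing GCS temp location caused that `ValueError`; BigQuery export reads and file-load writes stage data in GCS, so it is a common thing to check.

```python
def dataflow_args(project, region, bucket, job_name):
    """Build command-line style flags for running a Beam pipeline on Dataflow."""
    return [
        "--runner=DataflowRunner",
        f"--project={project}",
        f"--region={region}",
        # GCS scratch space; BigQuery exports and file loads stage files here.
        f"--temp_location=gs://{bucket}/tmp",
        f"--job_name={job_name}",
    ]


def make_options(argv):
    """Turn the flag list into PipelineOptions for beam.Pipeline(options=...)."""
    from apache_beam.options.pipeline_options import PipelineOptions  # lazy import

    return PipelineOptions(argv)
```

Dataflow job names must consist of lowercase letters, digits, and hyphens.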